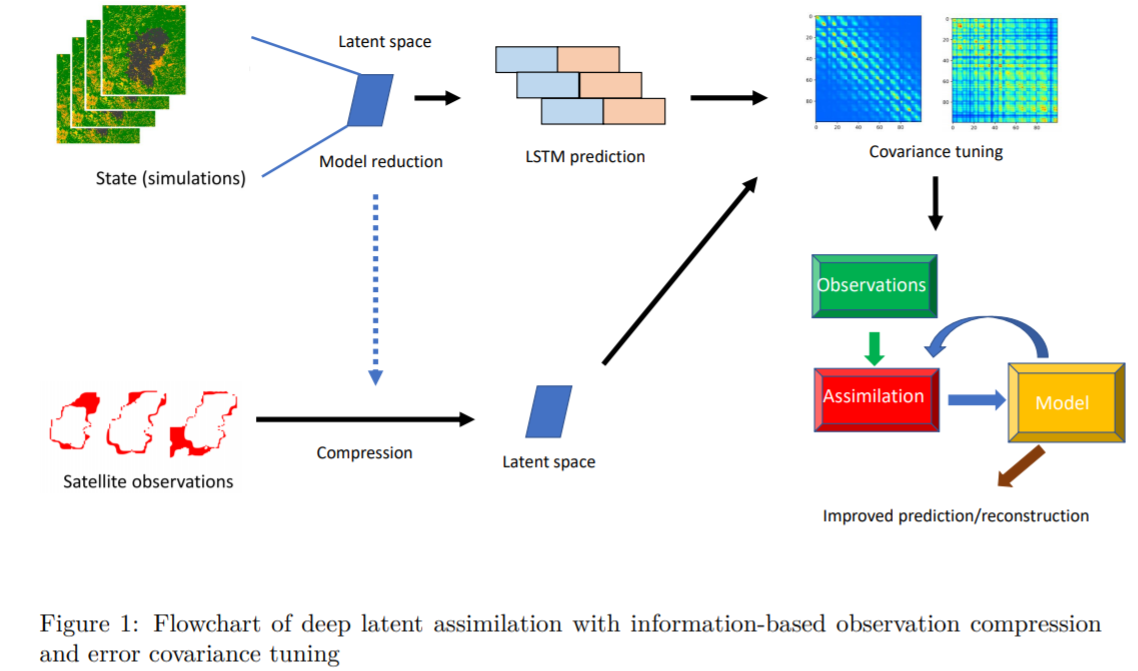

Real-time forecasting of wildfire dynamics, which has attracted increasing attention in fire safety science worldwide, is extremely challenging due to the complexity of the physical models and of the geographical features. Running physics-based simulations for large-scale wildfires can be computationally difficult, if not infeasible. In this work, we propose a novel algorithmic scheme that combines reduced-order modelling (ROM), recurrent neural networks (RNN), data assimilation (DA) and error covariance tuning for real-time forecasting and monitoring of the burned area. We use an operational cellular automata (CA) simulator to generate a dataset for training a data-driven surrogate model that forecasts fire diffusion. More precisely, based on snapshots of fire diffusion simulations, we first construct a low-dimensional latent space via proper orthogonal decomposition (POD) or a convolutional autoencoder (CAE). A long short-term memory (LSTM) neural network is then trained to make sequence-to-sequence predictions from the simulation results projected or encoded in the latent space. To correct the predicted burned area, satellite data are assimilated into the data-driven surrogate model: a latent DA, coupled with an error covariance tuning algorithm, is performed using daily satellite wildfire images as observations. Because the assimilation is carried out in the reduced latent space, the computational cost of DA is also considerably lower.
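The two linear-algebra ingredients of this pipeline, POD compression and a latent-space assimilation update, can be illustrated with a minimal NumPy sketch. It is not the implementation used in this work: the toy circular "burned area" snapshots, the helper names `pod_basis` and `latent_blue`, the diagonal covariances `B` and `R`, and the assumption that observations are encoded into the same latent space (so the observation operator is the identity there) are all illustrative choices. The LSTM forecast and the covariance tuning step are omitted; a later encoded snapshot simply stands in for the satellite observation.

```python
import numpy as np

def pod_basis(snapshots, q):
    """Return the temporal mean and the first q POD modes.

    snapshots has shape (n_state, n_snapshots); the modes are the leading
    left singular vectors of the mean-centred snapshot matrix.
    """
    mean = snapshots.mean(axis=1)
    u, _, _ = np.linalg.svd(snapshots - mean[:, None], full_matrices=False)
    return mean, u[:, :q]

def latent_blue(z_b, z_obs, B, R):
    """BLUE update in latent space, assuming an identity observation operator:
    z_a = z_b + B (B + R)^{-1} (z_obs - z_b)."""
    innovation = z_obs - z_b
    return z_b + B @ np.linalg.solve(B + R, innovation)

# Toy dataset (hypothetical): a circular burned area growing on a 16 x 16 grid.
n = 16
yy, xx = np.mgrid[0:n, 0:n]
dist = np.hypot(xx - n / 2, yy - n / 2)
snapshots = np.stack(
    [(dist <= 1.0 + 0.3 * t).astype(float).ravel() for t in range(20)], axis=1
)

q = 5  # latent dimension, chosen arbitrarily for this sketch
mean, basis = pod_basis(snapshots, q)

# Background: encoded state at step 10, standing in for a surrogate forecast.
z_b = basis.T @ (snapshots[:, 10] - mean)
# Observation: encoded state at step 12, standing in for a satellite image.
z_obs = basis.T @ (snapshots[:, 12] - mean)

B = 1.0 * np.eye(q)   # assumed background error covariance
R = 0.25 * np.eye(q)  # assumed observation error covariance
z_a = latent_blue(z_b, z_obs, B, R)

# Decode the analysis back to the full 256-cell grid.
x_a = mean + basis @ z_a
```

With these assumed covariances the update moves the background 80% of the way towards the observation in each latent coordinate. The linear solve involves only a `q` by `q` matrix rather than one of the full state dimension, which is the source of the cost reduction mentioned above.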

The framework proposed in this study can be readily applied to other spatio-temporal dynamical problems, such as air pollution monitoring or environmental epidemiology. Future work could, for instance, extend the current approach to 3D systems with unstructured meshes or non-uniform time steps.